

Capstone Project · SUNY Polytechnic Institute IDT · 2024-25

Teaching Mandarin with a Robot That Thinks

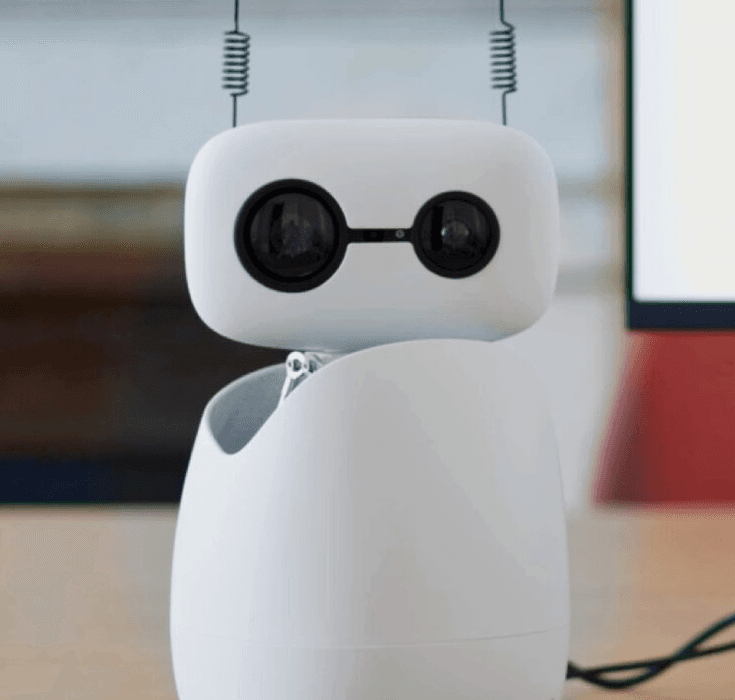

As a graduate researcher in the Instructional Design and Technology (IDT) program at SUNY Polytechnic Institute and in the AIXLab, with funding from a New York State grant, I designed and built an embodied AI tutoring system for Mandarin Chinese on Reachy Mini, a desktop humanoid robot. The project asks whether a physical robot tutor that combines voice interaction, agentic lesson orchestration, and embodied social presence can create a more effective learning experience than a screen-based agent.

Challenge

Language pedagogy and robotics rarely share the same design space, so the core challenge was turning second-language acquisition theory, human-robot interaction research, and real hardware constraints into one coherent tutoring system. I had to manage latency across speech recognition, LLM calls, gesture control, and audio feedback while also handling Mandarin tone recognition, face-directed gaze, and limited onboard compute.

Outcome

The result is a working Research through Design prototype: an embodied Mandarin tutoring system driven by an agentic AI pipeline, with its instructional logic reviewed by a Mandarin teacher and its behavior evaluated against four simulated learner personas in MuJoCo. It demonstrates that embodied AI can operationalize instructional principles such as comprehensible input, corrective feedback, and scaffolding in a desktop robot format, and it scopes formal efficacy testing as the next step.

01

Discovery

I mapped second-language acquisition theory, human-robot interaction literature, and Reachy Mini platform constraints into a single research design that could support a credible capstone investigation.

02

Build

I built an agentic tutoring pipeline that listens, generates pedagogically sequenced Mandarin prompts, evaluates learner responses, adapts difficulty in real time, and coordinates voice with robot gesture and gaze.
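The project does not specify how difficulty adapts in real time. As a minimal sketch, assuming each learner response has already been judged correct or incorrect, a rolling-accuracy controller can step the lesson level up or down. The class name, window size, and thresholds below are hypothetical illustrations, not the project's values.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class DifficultyController:
    """Hypothetical rolling-accuracy controller for lesson difficulty.

    Window size and thresholds are illustrative assumptions, not values
    taken from the project.
    """
    level: int = 1
    min_level: int = 1
    max_level: int = 5
    window: int = 5          # how many recent answers to judge by
    raise_at: float = 0.8    # step up when recent accuracy reaches this
    lower_at: float = 0.4    # step down when recent accuracy falls to this
    history: deque = field(default_factory=deque)

    def record(self, correct: bool) -> int:
        """Log one learner response and return the current level."""
        self.history.append(correct)
        if len(self.history) > self.window:
            self.history.popleft()  # keep only the most recent answers
        if len(self.history) < self.window:
            return self.level  # not enough evidence yet
        accuracy = sum(self.history) / len(self.history)
        if accuracy >= self.raise_at and self.level < self.max_level:
            self.level += 1
            self.history.clear()  # gather fresh evidence at the new level
        elif accuracy <= self.lower_at and self.level > self.min_level:
            self.level -= 1
            self.history.clear()
        return self.level


if __name__ == "__main__":
    ctrl = DifficultyController()
    for answer in [True, True, True, True, True]:
        level = ctrl.record(answer)
    print(level)  # 2
```

Clearing the history after each level change makes the controller wait for fresh answers at the new level instead of reacting to answers given at the old one.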

03

Launch

Instead of live human-subject testing, I evaluated the system through four simulated learner personas in MuJoCo and grounded the instructional logic through expert consultation with a Mandarin teacher before submitting the capstone as a technical design portfolio.

Notes

When I joined the Instructional Design and Technology program at SUNY Polytechnic Institute, I was drawn to the edges of the field, where learning theory bumps into engineering. A place in the AIXLab and a New York State grant to fund the work gave me the runway to pursue something genuinely novel: an embodied AI system for second-language instruction.

The project centers on a deceptively simple question: can a physical robot tutor Mandarin Chinese more effectively than a screen-based agent? The hypothesis draws on Human-Robot Interaction research, which has repeatedly found that embodiment (a body that gestures, turns, and occupies shared space) engages learners' attentional and social mechanisms differently than text or voice alone. For a tonal language like Mandarin, where tone and articulation carry meaning, that physical presence may matter more than it does for other languages.

"Embodiment activates social mechanisms in learners that a screen simply cannot replicate, and for tonal languages, that difference may be decisive."

The platform I chose is Reachy Mini, a compact desktop humanoid from Pollen Robotics with a four-microphone array, a 5 W speaker, and a full Python SDK. It is small enough to sit on a student's desk, expressive enough to carry a conversational lesson, and open enough to integrate a full AI stack. The robot is driven by an agentic workflow: a speech recognition module transcribes the learner's audio and passes the text to a large language model, which dynamically generates pedagogically sequenced Mandarin prompts, evaluates pronunciation attempts, adjusts difficulty in real time, and drives the robot's gestures and gaze without a rigid script. The loop closes on every utterance.
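The per-utterance loop can be sketched with Python's `asyncio`. This is a hypothetical illustration, not the project's code and not the Reachy Mini SDK: `transcribe`, `ask_llm`, `speak`, and `gesture` are stand-ins that only sleep and log, and the "evaluation" is a plain string comparison in place of an LLM judgement. What it shows is the control flow: the LLM request runs while a "thinking" gesture plays, and the spoken reply and its matching gesture launch together.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class TutorTurn:
    transcript: str
    reply: str
    correct: bool


# Hypothetical stand-ins: none of these are Reachy Mini SDK calls. Each one
# only sleeps to simulate latency and, for output actions, logs what it did.
async def transcribe(audio: bytes) -> str:
    await asyncio.sleep(0.01)  # simulated speech-recognition latency
    return audio.decode("utf-8")


async def ask_llm(transcript: str, target: str) -> dict:
    await asyncio.sleep(0.05)  # simulated LLM round trip
    correct = transcript.strip() == target  # placeholder for an LLM judgement
    reply = "很好!" if correct else f"再试一次: {target}"
    return {"reply": reply, "correct": correct,
            "gesture": "nod" if correct else "tilt"}


async def speak(text: str, log: list) -> None:
    log.append(f"speak:{text}")
    await asyncio.sleep(0.02)  # simulated text-to-speech playback


async def gesture(name: str, log: list) -> None:
    log.append(f"gesture:{name}")
    await asyncio.sleep(0.02)  # simulated motor command


async def run_turn(audio: bytes, target: str, log: list) -> TutorTurn:
    """One pass of the listen -> think -> respond loop."""
    transcript = await transcribe(audio)
    # Launch the LLM request first, then play a 'thinking' gesture while
    # it is in flight so the robot does not sit frozen during the wait.
    llm_task = asyncio.create_task(ask_llm(transcript, target))
    await gesture("thinking", log)
    result = await llm_task
    # Start speech and the matching gesture together so they line up.
    await asyncio.gather(speak(result["reply"], log),
                         gesture(result["gesture"], log))
    return TutorTurn(transcript, result["reply"], result["correct"])


if __name__ == "__main__":
    log: list = []
    turn = asyncio.run(run_turn("你好".encode("utf-8"), "你好", log))
    print(turn.correct, log)
```

Overlapping the wait for the model with visible robot behavior is one common way to make unavoidable latency feel shorter; whether the project used this particular pattern is not stated.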

The technical constraints shaped every design decision. Onboard compute is limited, so managing latency across LLM API calls, the audio pipeline, and motor commands required careful orchestration. Mandarin tone recognition (four lexical tones plus a neutral tone) demands audio preprocessing that consumer microphones handle poorly; the four-mic array helps, but the signal chain still required custom filtering. Localizing the robot's head gaze to a learner's face in real time added a computer-vision dependency that had to be balanced against the processing budget.
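How the project recognizes tones is not described. A common starting point is to classify each syllable's pitch (F0) contour by its shape. The sketch below assumes an F0 track in Hz has already been extracted by some pitch tracker and segmented per syllable (neither step is shown), and that a per-speaker reference pitch is known. The function names, thresholds, and the "very short means neutral" rule are hypothetical.

```python
import math
from statistics import median


def smooth(contour: list[float], k: int = 3) -> list[float]:
    """Median-filter an F0 track to suppress isolated pitch-tracker spikes."""
    half = k // 2
    return [median(contour[max(0, i - half): i + half + 1])
            for i in range(len(contour))]


def to_semitones(contour: list[float], ref_hz: float) -> list[float]:
    """Express each pitch value in semitones relative to the speaker's reference."""
    return [12 * math.log2(f / ref_hz) for f in contour]


def classify_tone(contour_hz: list[float], ref_hz: float,
                  min_frames: int = 5, slope_st: float = 2.0,
                  dip_st: float = 1.5) -> int:
    """Return 1-4 for the lexical tones or 5 for neutral (crude heuristic).

    All thresholds are illustrative guesses, not tuned values.
    """
    if len(contour_hz) < min_frames:
        return 5  # assumption: very short voiced spans are treated as neutral
    st = to_semitones(smooth(contour_hz), ref_hz)
    start, end = st[0], st[-1]
    low = min(st)
    low_at = st.index(low)
    # Tone 3: pitch dips well below both endpoints somewhere mid-syllable.
    if 0 < low_at < len(st) - 1 and min(start, end) - low >= dip_st:
        return 3
    if end - start >= slope_st:
        return 2  # rising
    if start - end >= slope_st:
        return 4  # falling
    return 1      # roughly level


if __name__ == "__main__":
    rising = [180, 190, 200, 210, 220, 230, 240, 250, 260, 280]
    print(classify_tone(rising, ref_hz=200.0))  # 2
```

A contour rule like this is fragile in connected speech, where the third tone is often realized as a low fall without the final rise and tone sandhi alters surface contours; it illustrates the shape of the problem rather than solving it.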

Because the project is framed as a Research through Design investigation, a methodology that treats the prototype itself as a knowledge claim, human-subjects testing was deliberately excluded from scope. Instead, I designed four detailed learner personas covering a range of Mandarin proficiency levels and learning styles, then ran simulation-based evaluations in Reachy Mini's MuJoCo environment to stress-test timing, dialogue branching, and error-recovery behavior. A Mandarin teacher served as an expert consultant, reviewing the instructional logic, vocabulary sequencing, and feedback quality; this grounded the pedagogical claims without requiring IRB review.
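Persona-driven testing of dialogue branching and error recovery can be sketched independently of the robot. The harness below is hypothetical: it does not touch MuJoCo or any robot API, the four personas are placeholders reduced to a single answer-accuracy number (the project's actual personas are not described here), and `tutor_policy` is an invented branching rule. It checks one invariant worth stress-testing: a learner who keeps failing still reaches the end of the lesson instead of looping forever.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    name: str
    accuracy: float  # chance of answering an item correctly (placeholder)


def tutor_policy(item: int, correct: bool, retries: int,
                 max_retries: int = 2) -> tuple[str, int]:
    """Hypothetical branching rule: advance on success, correct and re-prompt
    on failure, and give the model answer after max_retries to avoid loops."""
    if correct:
        return "advance", item + 1
    if retries < max_retries:
        return "correct_and_retry", item
    return "model_answer_and_advance", item + 1


def simulate(persona: Persona, n_items: int = 10, seed: int = 0,
             max_turns: int = 100) -> dict:
    """Run one simulated lesson and tally which branches fired."""
    rng = random.Random(seed)  # seeded so each run is reproducible
    item, retries, turns = 0, 0, 0
    actions: dict[str, int] = {}
    while item < n_items and turns < max_turns:
        correct = rng.random() < persona.accuracy
        action, next_item = tutor_policy(item, correct, retries)
        actions[action] = actions.get(action, 0) + 1
        retries = retries + 1 if next_item == item else 0
        item = next_item
        turns += 1
    return {"persona": persona.name, "finished": item >= n_items,
            "turns": turns, "actions": actions}


# Placeholder personas spanning low to high accuracy (hypothetical values).
PERSONAS = [Persona("persona_a", 0.2), Persona("persona_b", 0.5),
            Persona("persona_c", 0.75), Persona("persona_d", 0.95)]

if __name__ == "__main__":
    for p in PERSONAS:
        print(simulate(p, seed=42))
```

With `max_retries=2`, every item takes between one and three turns, so a ten-item lesson should always finish in 10 to 30 turns; a run that hits `max_turns` would flag a branching bug.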

What this capstone ultimately demonstrates is not just a working prototype, but a design argument: that agentic AI, thoughtfully orchestrated inside an embodied platform, can instantiate second-language acquisition principles such as comprehensible input, corrective feedback, and interactional scaffolding in a form factor that travels to the learner's desk. The next step, now clearly scoped, is a formal efficacy study. The machine is ready to teach.

Q&A

What is this project?

It is a SUNY Polytechnic Institute IDT capstone project exploring an embodied AI Mandarin tutor built on Reachy Mini, developed in the AIXLab with support from a New York State grant.

Why use a robot instead of a screen-based tutor?

The project tests whether embodiment changes learner attention, engagement, and social interaction in ways that may improve language learning, especially for Mandarin where tone, rhythm, and physical presence matter.

What does the system actually do?

The robot listens to the learner, sends speech through an AI pipeline, generates Mandarin teaching prompts, evaluates responses, adjusts difficulty, and coordinates spoken feedback with gesture and gaze.

How was it evaluated without human subjects?

I created four learner personas and tested the tutoring flow in MuJoCo simulation, then used expert consultation with a Mandarin teacher to review sequencing, feedback quality, and instructional soundness.

What makes the workflow agentic?

The system is not a fixed script. It dynamically sequences prompts, responds to learner input, adapts lesson difficulty, and orchestrates multiple components across speech, language generation, and robot behavior.

What comes next?

The next step is a formal efficacy study to test how well the embodied tutoring approach performs with real learners in practice.